In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder.

The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding is done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, which is used for error detection and correction, or with line coding, the means for mapping data onto a signal.

Data compression algorithms present a space-time complexity trade-off between the bytes needed to store or transmit information and the computational resources needed to perform the encoding and decoding. The design of data compression schemes involves balancing the degree of compression, the amount of distortion introduced (when using lossy data compression), and the computational resources or time required to compress and decompress the data.

Lossless

Lossless data compression algorithms usually exploit statistical redundancy to represent data without losing any information, so that the process is reversible. Lossless compression is possible because most real-world data exhibits statistical redundancy. For example, an image may have areas of color that do not change over several pixels; instead of coding "red pixel, red pixel, ..." the data may be encoded as "279 red pixels". This is a basic example of run-length encoding; there are many schemes to reduce file size by eliminating redundancy.
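The "279 red pixels" idea can be sketched in a few lines of Python. This is a minimal illustration only: the function names `rle_encode` and `rle_decode` and the list-of-pairs output are assumptions made for the example, not a description of any particular file format.

```python
from itertools import groupby

def rle_encode(symbols):
    """Collapse each run of equal symbols into a (symbol, count) pair."""
    return [(value, sum(1 for _ in run)) for value, run in groupby(symbols)]

def rle_decode(pairs):
    """Expand (symbol, count) pairs back into the original sequence."""
    out = []
    for value, count in pairs:
        out.extend([value] * count)
    return out

# A row of pixels dominated by one long run of red.
pixels = ["red"] * 279 + ["blue"] * 3 + ["red"] * 2
encoded = rle_encode(pixels)
print(encoded)                        # [('red', 279), ('blue', 3), ('red', 2)]
assert rle_decode(encoded) == pixels  # the process is reversible
```

Input without long runs gains nothing from this scheme and can even grow, which is one reason practical formats combine run-length encoding with other methods.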

The Lempel–Ziv (LZ) compression methods are among the most popular algorithms for lossless storage. DEFLATE is a variation on LZ optimized for decompression speed and compression ratio, but compression can be slow. In the mid-1980s, following work by Terry Welch, the Lempel–Ziv–Welch (LZW) algorithm rapidly became the method of choice for most general-purpose compression systems. LZW is used in GIF images, programs such as PKZIP, and hardware devices such as modems. LZ methods use a table-based compression model where table entries are substituted for repeated strings of data. For most LZ methods, this table is generated dynamically from earlier data in the input. The table itself is often Huffman encoded. Grammar-based codes can compress highly repetitive input extremely effectively, for instance a biological data collection of the same or closely related species, a huge versioned document collection, or an internet archive. The basic task of grammar-based codes is constructing a context-free grammar deriving a single string. Other practical grammar compression algorithms include Sequitur and Re-Pair.
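The table-based model described above, in which the table is built dynamically from earlier input, can be illustrated with a textbook-style LZW sketch. It assumes byte input and unbounded integer codes; real implementations (for example in GIF) add variable code widths and table resets that are omitted here, and the names `lzw_compress` and `lzw_decompress` are chosen only for this example.

```python
def lzw_compress(data: bytes) -> list[int]:
    # The table starts with every single byte; longer strings are added as seen.
    table = {bytes([i]): i for i in range(256)}
    current = b""
    codes = []
    for byte in data:
        candidate = current + bytes([byte])
        if candidate in table:
            current = candidate              # keep extending the match
        else:
            codes.append(table[current])     # emit code for the longest match
            table[candidate] = len(table)    # remember the new string
            current = bytes([byte])
    if current:
        codes.append(table[current])
    return codes

def lzw_decompress(codes: list[int]) -> bytes:
    if not codes:
        return b""
    # The decoder rebuilds the same table from the codes alone.
    table = {i: bytes([i]) for i in range(256)}
    previous = table[codes[0]]
    out = [previous]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):
            # Code refers to the string that is being defined right now.
            entry = previous + previous[:1]
        else:
            raise ValueError("invalid LZW code")
        out.append(entry)
        table[len(table)] = previous + entry[:1]
        previous = entry
    return b"".join(out)

text = b"TOBEORNOTTOBEORTOBEORNOT"
codes = lzw_compress(text)
assert lzw_decompress(codes) == text
```

Because the decoder reconstructs the table as it goes, the table itself never has to be transmitted in this variant.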

The strongest modern lossless compressors use probabilistic models, such as prediction by partial matching. The Burrows–Wheeler transform can also be viewed as an indirect form of statistical modelling. In a further refinement of the direct use of probabilistic modelling, statistical estimates can be coupled to an algorithm called arithmetic coding. Arithmetic coding is a more modern coding technique that uses the mathematical calculations of a finite-state machine to produce a string of encoded bits from a series of input data symbols. It can achieve superior compression compared to other techniques such as the better-known Huffman algorithm. It uses an internal memory state to avoid the need to perform a one-to-one mapping of individual input symbols to distinct representations that use an integer number of bits, and it clears out the internal memory only after encoding the entire string of data symbols. Arithmetic coding applies especially well to adaptive data compression tasks where the statistics vary and are context-dependent, as it can be easily coupled with an adaptive model of the probability distribution of the input data. An early example of the use of arithmetic coding was in an optional (but not widely used) feature of the JPEG image coding standard. It has since been applied in various other designs including H.263, H.264/MPEG-4 AVC and HEVC for video coding.
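The interval-narrowing idea behind arithmetic coding can be sketched with exact rational arithmetic. This is a teaching sketch under stated assumptions: it uses a fixed (non-adaptive) model, Python's `fractions.Fraction` instead of the finite-precision state of a practical coder, and it passes the message length to the decoder separately. The model probabilities and function names are hypothetical.

```python
from fractions import Fraction

def build_intervals(probabilities):
    """Give each symbol a sub-interval of [0, 1) as wide as its probability."""
    intervals, low = {}, Fraction(0)
    for symbol, p in probabilities.items():
        intervals[symbol] = (low, low + p)
        low += p
    return intervals

def arithmetic_encode(message, probabilities):
    intervals = build_intervals(probabilities)
    low, width = Fraction(0), Fraction(1)
    for symbol in message:
        sym_low, sym_high = intervals[symbol]
        # Narrow the current interval to the part assigned to this symbol.
        low, width = low + width * sym_low, width * (sym_high - sym_low)
    return low + width / 2  # any number inside the final interval would do

def arithmetic_decode(code, length, probabilities):
    intervals = build_intervals(probabilities)
    out = []
    for _ in range(length):
        for symbol, (sym_low, sym_high) in intervals.items():
            if sym_low <= code < sym_high:
                out.append(symbol)
                # Rescale so the next symbol can be read the same way.
                code = (code - sym_low) / (sym_high - sym_low)
                break
    return "".join(out)

# Hypothetical fixed model: 'a' is much more likely than 'b' or 'c'.
model = {"a": Fraction(3, 4), "b": Fraction(1, 8), "c": Fraction(1, 8)}
message = "aabacaaab"
code = arithmetic_encode(message, model)
assert arithmetic_decode(code, len(message), model) == message
```

Likely symbols shrink the interval only slightly, so they cost less than one bit each on average, which a code that assigns a whole number of bits per symbol cannot do.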

Archive software typically has the ability to adjust the "dictionary size", where a larger size demands more random-access memory during compression and decompression, but yields stronger compression, especially on repeating patterns in files' content.
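The effect of dictionary size can be observed with Python's standard `zlib` module, whose `wbits` parameter sets the base-two logarithm of the DEFLATE history window. The sketch below is an illustration under assumptions: the test data (a pseudo-random 4 KiB block repeated four times) is artificial and chosen so that the repeats are farther apart than the small window can reach.

```python
import random
import zlib

def deflate(data: bytes, wbits: int) -> bytes:
    """Compress with a history window of 2**wbits bytes."""
    compressor = zlib.compressobj(level=9, wbits=wbits)
    return compressor.compress(data) + compressor.flush()

# A 4 KiB block of pseudo-random bytes, repeated: the only redundancy
# is the repetition, which lies 4096 bytes back in the input.
rng = random.Random(0)
block = bytes(rng.getrandbits(8) for _ in range(4096))
data = block * 4

small = deflate(data, wbits=9)    # 512-byte window: repeats are out of reach
large = deflate(data, wbits=15)   # 32 KiB window: repeats can be referenced

print(len(data), len(small), len(large))
assert zlib.decompress(small) == data
assert zlib.decompress(large) == data
```

The larger window finds the repeated block and produces a much smaller output, at the cost of keeping more history in memory on both the compressing and decompressing side.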

Lossy

In the late 1980s, digital images became more common, and standards for lossless image compression emerged. In the early 1990s, lossy compression methods began to be widely used. In these schemes, some loss of information is accepted, as dropping nonessential detail can save storage space. There is a corresponding trade-off between preserving information and reducing size. Lossy data compression schemes are informed by research on how people perceive the data in question. For example, the human eye is more sensitive to subtle variations in luminance than it is to variations in color. JPEG image compression works in part by rounding off nonessential bits of information. A number of popular compression formats exploit these perceptual differences, including psychoacoustics for sound, and psychovisuals for images and video.

Most forms of lossy compression are based on transform coding, especially the discrete cosine transform (DCT). It was first proposed in 1972 by Nasir Ahmed, who then developed a working algorithm with T. Natarajan and K. R. Rao in 1973, before introducing it in January 1974. DCT is the most widely used lossy compression method, and is used in multimedia formats for images (such as JPEG and HEIF), video (such as MPEG, AVC and HEVC) and audio (such as MP3, AAC and Vorbis).
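Transform coding followed by "rounding off" can be sketched on a one-dimensional signal. The example below is a simplified illustration, not the pipeline of any named format: it applies an orthonormal DCT-II to eight samples, quantizes the coefficients with a single hypothetical step size, and reconstructs an approximation. Real codecs work on two-dimensional blocks with per-frequency quantization tables and entropy-code the result.

```python
import math

def dct(signal):
    """Orthonormal DCT-II of a 1-D sequence."""
    n = len(signal)
    out = []
    for k in range(n):
        scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
        out.append(scale * sum(x * math.cos(math.pi * (i + 0.5) * k / n)
                               for i, x in enumerate(signal)))
    return out

def idct(coeffs):
    """Inverse of dct() above (orthonormal DCT-III)."""
    n = len(coeffs)
    out = []
    for i in range(n):
        total = 0.0
        for k, c in enumerate(coeffs):
            scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
            total += scale * c * math.cos(math.pi * (i + 0.5) * k / n)
        out.append(total)
    return out

def quantize(coeffs, step):
    """Lossy step: keep only the nearest multiple of `step`."""
    return [round(c / step) for c in coeffs]

def dequantize(levels, step):
    return [q * step for q in levels]

samples = [52, 55, 61, 66, 70, 61, 64, 73]   # e.g. one row of pixel values
step = 10                                     # hypothetical quantization step
levels = quantize(dct(samples), step)
restored = idct(dequantize(levels, step))

print(levels)                        # small integers, cheap to entropy-code
print([round(v) for v in restored])  # close to, but not equal to, the input
```

A larger step produces smaller integers (and more zeros) at the price of a larger reconstruction error, which is the size-versus-fidelity trade-off of lossy coding.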

Lossy image compression is used in digital cameras, to increase storage capacities. Similarly, DVDs, Blu-ray and streaming video use lossy video coding formats. Lossy compression is extensively used in video.

In lossy audio compression, methods of psychoacoustics are used to remove non-audible (or less audible) components of the audio signal. Compression of human speech is often performed with even more specialized techniques; speech coding is distinguished as a separate discipline from general-purpose audio compression. Speech coding is used in internet telephony, for example, while general-purpose audio compression is used for CD ripping and is decoded by audio players.

Lossy compression can cause generation loss.

Theory

The theoretical basis for compression is provided by information theory and, more specifically, Shannon's source coding theorem; domain-specific theories include algorithmic information theory for lossless compression and rate–distortion theory for lossy compression. These areas of study were essentially created by Claude Shannon, who published fundamental papers on the topic in the late 1940s and early 1950s. Other topics associated with compression include coding theory and statistical inference.
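The bound that the source coding theorem places on lossless compression is the entropy of the source. As a rough illustration, the sketch below computes the empirical entropy of a byte string, under the simplifying assumption that bytes are independent draws from the observed frequencies; the function name `shannon_entropy` is chosen for this example.

```python
import math
from collections import Counter

def shannon_entropy(data: bytes) -> float:
    """Empirical entropy in bits per symbol, treating bytes as independent."""
    counts = Counter(data)
    total = len(data)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

sample = b"aaaabbcd"
bits_per_symbol = shannon_entropy(sample)
print(bits_per_symbol)                 # 1.75
print(bits_per_symbol * len(sample))   # 14.0 bits, versus 64 bits stored raw
```

Under that independence assumption, no lossless code can use fewer than about 1.75 bits per symbol on average for this distribution. Compressors that model dependencies between symbols can do better than this order-0 figure on real data.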

Machine learning

There is a close connection between machine learning and compression. A system that predicts the posterior probabilities of a sequence given its entire history can be used for optimal data compression (by using arithmetic coding on the output distribution). Conversely, an optimal compressor can be used for prediction (by finding the symbol that compresses best, given the previous history). This equivalence has been used as a justification for using data compression as a benchmark for "general intelligence".
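The link between prediction and compressed size can be made concrete: if a model assigns probability p to the symbol that actually occurs, an ideal arithmetic coder spends about -log2(p) bits on it. The sketch below sums that cost under a deliberately simple predictor, an adaptive byte-frequency model with add-one smoothing. Both the model and the function name are assumptions made for illustration.

```python
import math
from collections import Counter

def ideal_code_length(data: bytes) -> float:
    """Bits an ideal arithmetic coder would need under an adaptive order-0 model."""
    counts = Counter()
    seen = 0
    bits = 0.0
    for byte in data:
        # Predict the next byte from the history seen so far (add-one smoothing
        # over the 256 possible byte values).
        p = (counts[byte] + 1) / (seen + 256)
        bits += -math.log2(p)
        # Update the model only after coding, so a decoder can stay in sync.
        counts[byte] += 1
        seen += 1
    return bits

predictable = b"a" * 1024
varied = bytes(range(256)) * 4

print(ideal_code_length(predictable))  # far below the raw 8192 bits
print(ideal_code_length(varied))       # this model cannot predict it well
```

Swapping in a better predictor lowers the total without changing the coding step, which is the sense in which better prediction means better compression.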

In an alternative view, compression algorithms implicitly map strings into feature space vectors, and compression-based similarity measures compute similarity within these feature spaces. For each compressor C(.), one defines an associated vector space ℵ, such that the output of C(.) for an input string x corresponds to the vector norm ||~x||. An exhaustive examination of the feature spaces underlying all compression algorithms is impractical; analyses of this view instead examine three representative lossless compression methods: LZW, LZ77, and PPM.
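One widely used compression-based similarity measure is the normalized compression distance (NCD), which compares the compressed size of two strings joined together with their individual compressed sizes. The sketch below uses `zlib` as the compressor C(.); the choice of compressor and the sample inputs are assumptions for illustration.

```python
import random
import zlib

def compressed_size(data: bytes) -> int:
    """C(x): length of the zlib-compressed representation."""
    return len(zlib.compress(data, 9))

def ncd(x: bytes, y: bytes) -> float:
    """Normalized compression distance: near 0 for similar inputs, near 1 for unrelated ones."""
    cx, cy, cxy = compressed_size(x), compressed_size(y), compressed_size(x + y)
    return (cxy - min(cx, cy)) / max(cx, cy)

text = b"the quick brown fox jumps over the lazy dog " * 20
rng = random.Random(0)
noise = bytes(rng.getrandbits(8) for _ in range(len(text)))

print(ncd(text, text))    # small: the second copy adds almost nothing
print(ncd(text, noise))   # much larger: the inputs share no structure
```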

According to AIXI theory, a connection more directly explained in Hutter Prize, the best possible compression of x is the smallest possible software that generates x. For example, in that model, a zip file's compressed size includes both the zip file and the unzipping software, since it cannot be unzipped without both, but there may be an even smaller combined form.

Examples of AI-powered audio/video compression software include NVIDIA Maxine and AIVC. Examples of software that can perform AI-powered image compression include OpenCV, TensorFlow, MATLAB's Image Processing Toolbox (IPT) and High-Fidelity Generative Image Compression.

In unsupervised machine learning, k-means clustering can be utilized to compress data by grouping similar data points into clusters. This technique simplifies handling extensive datasets that lack predefined labels and finds widespread use in fields such as image compression.

Data compression aims to reduce the size of data files, enhancing storage efficiency and speeding up data transmission. K-means clustering, an unsupervised machine learning algorithm, is employed to partition a dataset into a specified number of clusters, k, each represented by the centroid of its points. This process condenses extensive datasets into a more compact set of representative points. Particularly beneficial in image and signal processing, k-means clustering aids in data reduction by replacing groups of data points with their centroids, thereby preserving the core information of the original data while significantly decreasing the required storage space.
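Replacing points with centroids can be sketched as color quantization: each pixel is stored as a small index into a palette of k centroid colors. The code below is a minimal pure-Python version of Lloyd's algorithm with a naive deterministic initialization (evenly spaced input points), an assumption made to keep the example reproducible; practical implementations usually use random or k-means++ seeding. The result is lossy, since each pixel is approximated by its centroid.

```python
def nearest(point, centroids):
    """Index of the centroid closest to `point` (squared Euclidean distance)."""
    return min(range(len(centroids)),
               key=lambda i: sum((a - b) ** 2 for a, b in zip(point, centroids[i])))

def mean(cluster):
    """Component-wise mean of a non-empty list of points."""
    return tuple(sum(axis) / len(cluster) for axis in zip(*cluster))

def kmeans(points, k, iterations=20):
    # Naive deterministic start: k evenly spaced input points.
    centroids = [points[i * len(points) // k] for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            clusters[nearest(p, centroids)].append(p)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids

# Six RGB pixels: three reddish, three bluish.
pixels = [(250, 10, 10), (245, 5, 12), (255, 0, 0),
          (10, 10, 250), (0, 5, 245), (5, 0, 255)]

palette = kmeans(pixels, k=2)
indices = [nearest(p, palette) for p in pixels]
print(palette)   # two representative colors
print(indices)   # [0, 0, 0, 1, 1, 1]
```

Storing the palette plus one small index per pixel takes less space than storing three color components per pixel, and the saving grows with image size.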

Large language models (LLMs) are also efficient lossless data compressors on some data sets, as demonstrated by DeepMind's research with the Chinchilla 70B model. Chinchilla 70B outperformed conventional methods such as Portable Network Graphics (PNG) for images and Free Lossless Audio Codec (FLAC) for audio. It achieved compression of image and audio data to 43.4% and 16.4% of their original sizes, respectively. There is, however, some reason to be concerned that the data set used for testing overlaps the LLM training data set, making it possible that the Chinchilla 70B model is only an efficient compression tool on data it has already been trained on.

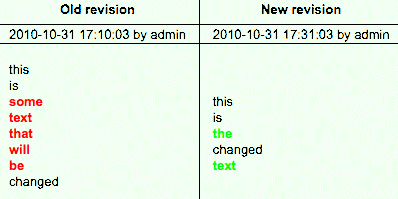

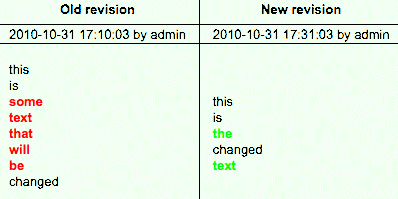

Data differencing

Data compression can be viewed as a special case of data differencing. Data differencing consists of producing a ''difference'' given a ''source'' and a ''target,'' with patching reproducing the ''target'' given a ''source'' and a ''difference.'' Since there is no separate source and target in data compression, one can consider data compression as data differencing with empty source data, the compressed file corresponding to a difference from nothing. This is the same as considering absolute entropy (corresponding to data compression) as a special case of relative entropy (corresponding to data differencing) with no initial data.

The term ''differential compression'' is used to emphasize the data differencing connection.
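One way to see the relationship is to let a general-purpose compressor use the ''source'' as a preset dictionary. The sketch below does this with the `zdict` parameter of Python's `zlib` module; the names `make_diff` and `apply_patch` and the sample data are assumptions for illustration, and dedicated differencing tools use their own formats and algorithms.

```python
import random
import zlib

def make_diff(source: bytes, target: bytes) -> bytes:
    """Encode `target` relative to `source` (used as a preset dictionary)."""
    compressor = zlib.compressobj(level=9, zdict=source)
    return compressor.compress(target) + compressor.flush()

def apply_patch(source: bytes, diff: bytes) -> bytes:
    """Reproduce the target from the same source and the difference."""
    decompressor = zlib.decompressobj(zdict=source)
    return decompressor.decompress(diff) + decompressor.flush()

# Source: 2000 pseudo-random bytes. Target: the same data with a small insertion.
rng = random.Random(1)
source = bytes(rng.getrandbits(8) for _ in range(2000))
target = source[:1000] + b"CHANGED" + source[1000:]

diff = make_diff(source, target)     # difference from the source
plain = zlib.compress(target, 9)     # ordinary compression: no source available

print(len(target), len(plain), len(diff))
assert apply_patch(source, diff) == target
```

With the source available, only the changed region costs anything substantial; without it, the compressor has to encode the whole target, which corresponds to the "difference from nothing" case.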

Uses

Image

Entropy coding originated in the 1940s with the introduction of Shannon–Fano coding, the basis for Huffman coding which was developed in 1950. Transform coding dates back to the late 1960s, with the introduction of fast Fourier transform (FFT) coding in 1968 and the Hadamard transform in 1969.
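As a rough illustration of entropy coding, the following sketch builds a Huffman code by repeatedly merging the two least frequent groups of symbols. The function name `huffman_code` and the sample string are assumptions made for this example; practical coders also store the code table and pack the bits, which is omitted here.

```python
import heapq
from collections import Counter


def huffman_code(text: str) -> dict:
    """Return a {symbol: bit string} prefix code for text."""
    freq = Counter(text)
    if len(freq) == 1:
        # A single distinct symbol still needs one bit per occurrence.
        return {next(iter(freq)): "0"}
    # Heap entries: (weight, unique tie breaker, {symbol: code so far}).
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(sorted(freq.items()))]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        # Prefix one group with 0 and the other with 1, then merge them.
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, tie, merged))
        tie += 1
    return heap[0][2]


message = "abracadabra"
codes = huffman_code(message)
encoded_bits = sum(len(codes[ch]) for ch in message)
print(codes, encoded_bits)
```

Frequent symbols receive short codes and rare symbols long ones, so the total is well below the 8 bits per character of a fixed-width encoding.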

An important image compression technique is the discrete cosine transform (DCT), a technique developed in the early 1970s. DCT is the basis for JPEG, a lossy compression format which was introduced by the Joint Photographic Experts Group (JPEG) in 1992. JPEG greatly reduces the amount of data required to represent an image at the cost of a relatively small reduction in image quality and has become the most widely used image file format. Its highly efficient DCT-based compression algorithm was largely responsible for the wide proliferation of digital images and digital photos.
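Why a DCT helps can be seen on a single row of pixels. The sketch below is not the JPEG algorithm: it applies an unnormalized one-dimensional DCT-II to eight sample values and quantizes the coefficients with one uniform step. The pixel values and the step size `STEP` are assumptions chosen for illustration; JPEG itself works on 8×8 blocks with per-coefficient quantization tables followed by entropy coding.

```python
import math


def dct(x):
    """Unnormalized DCT-II of a sequence."""
    n = len(x)
    return [sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
            for k in range(n)]


def idct(c):
    """Inverse of dct() above (a scaled DCT-III)."""
    n = len(c)
    return [(c[0] / 2 + sum(c[k] * math.cos(math.pi * (i + 0.5) * k / n)
                            for k in range(1, n))) * 2 / n
            for i in range(n)]


STEP = 16  # hypothetical quantization step; larger means more loss
pixels = [100, 102, 104, 106, 108, 110, 112, 114]  # a smooth gradient

quantized = [round(c / STEP) for c in dct(pixels)]   # the lossy step
restored = idct([q * STEP for q in quantized])

nonzero = sum(1 for q in quantized if q != 0)
max_error = max(abs(a - b) for a, b in zip(pixels, restored))
print(quantized, nonzero, max_error)
```

For smooth input most quantized coefficients become zero, which is what makes the result cheap to store, while the restored values stay close to the originals.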

Lempel–Ziv–Welch (LZW) is a lossless compression algorithm developed in 1984. It is used in the GIF format, introduced in 1987. DEFLATE, a lossless compression algorithm specified in 1996, is used in the Portable Network Graphics (PNG) format.
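A minimal sketch of the LZW idea follows: both sides start from a table of all single bytes and grow it with every new phrase seen, so the table itself never has to be transmitted. This is a textbook variant with unbounded integer codes, assumed here for clarity; it is not the GIF bitstream, which adds variable code widths and control codes.

```python
def lzw_compress(data: bytes) -> list:
    """Return a list of integer codes for data."""
    table = {bytes([i]): i for i in range(256)}
    current = b""
    codes = []
    for byte in data:
        candidate = current + bytes([byte])
        if candidate in table:
            current = candidate            # keep extending the phrase
        else:
            codes.append(table[current])   # emit the longest known phrase
            table[candidate] = len(table)  # learn the new phrase
            current = bytes([byte])
    if current:
        codes.append(table[current])
    return codes


def lzw_decompress(codes: list) -> bytes:
    """Rebuild the original bytes from LZW codes."""
    if not codes:
        return b""
    table = {i: bytes([i]) for i in range(256)}
    previous = table[codes[0]]
    out = [previous]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == len(table):
            # The code refers to the phrase that is being defined right now.
            entry = previous + previous[:1]
        else:
            raise ValueError("invalid LZW code")
        out.append(entry)
        table[len(table)] = previous + entry[:1]
        previous = entry
    return b"".join(out)


text = b"TOBEORNOTTOBEORTOBEORNOT"
codes = lzw_compress(text)
print(len(text), len(codes))
```

Repeated phrases such as `TOBEOR` are replaced by single codes once they have been seen, so the code list is shorter than the input.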

Wavelet compression, the use of wavelets in image compression, began after the development of DCT coding. The JPEG 2000 standard was introduced in 2000. In contrast to the DCT algorithm used by the original JPEG format, JPEG 2000 instead uses discrete wavelet transform (DWT) algorithms. JPEG 2000 technology, which includes the Motion JPEG 2000 extension, was selected as the video coding standard for digital cinema in 2004.

Audio

Audio data compression, not to be confused with dynamic range compression, has the potential to reduce the transmission bandwidth and storage requirements of audio data. Audio compression algorithms are implemented in software as audio codecs. In both lossy and lossless compression, information redundancy is reduced, using methods such as coding, quantization, DCT and linear prediction to reduce the amount of information used to represent the uncompressed data.

Lossy audio compression algorithms provide higher compression and are used in numerous audio applications including Vorbis and MP3. These algorithms almost all rely on psychoacoustics to eliminate or reduce fidelity of less audible sounds, thereby reducing the space required to store or transmit them.

The acceptable trade-off between loss of audio quality and transmission or storage size depends upon the application. For example, one 640 MB compact disc (CD) holds approximately one hour of uncompressed high fidelity music, less than 2 hours of music compressed losslessly, or 7 hours of music compressed in the MP3 format at a medium bit rate. A digital sound recorder can typically store around 200 hours of clearly intelligible speech in 640 MB.
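These figures can be checked with a little arithmetic. The sketch below assumes CD-quality PCM (44.1 kHz, 16 bits, two channels) and 1 MB = 10^6 bytes; neither assumption is stated in the text above, and the function names are made up for this example.

```python
CAPACITY_BYTES = 640 * 10**6  # assumption: 1 MB = 10**6 bytes


def pcm_bytes_per_second(sample_rate_hz, bits_per_sample, channels):
    """Data rate of uncompressed PCM audio."""
    return sample_rate_hz * bits_per_sample // 8 * channels


def bit_rate_for_hours(capacity_bytes, hours):
    """Average bit rate (bit/s) that fills the capacity in the given time."""
    return capacity_bytes * 8 / (hours * 3600)


cd_rate = pcm_bytes_per_second(44_100, 16, 2)         # assumed CD parameters
uncompressed_hours = CAPACITY_BYTES / cd_rate / 3600  # about 1 hour

mp3_kbps = bit_rate_for_hours(CAPACITY_BYTES, 7) / 1000       # about 203
speech_kbps = bit_rate_for_hours(CAPACITY_BYTES, 200) / 1000  # about 7

print(round(uncompressed_hours, 2), round(mp3_kbps), round(speech_kbps, 1))
```

Under these assumptions, seven hours on the disc corresponds to roughly 200 kbit/s, and 200 hours of speech to roughly 7 kbit/s.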

Lossless audio compression produces a representation of digital data that can be decoded to an exact digital duplicate of the original. Compression ratios are around 50–60% of the original size, which is similar to those for generic lossless data compression. Lossless codecs use curve fitting or linear prediction as a basis for estimating the signal. Parameters describing the estimation and the difference between the estimation and the actual signal are coded separately.
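The prediction-plus-residual idea can be sketched as follows. The example assumes a fixed second-order predictor (each sample is guessed by extending the line through the previous two), so there are no predictor parameters to transmit; real codecs typically choose or adapt the predictor and then entropy-code the residuals, which is left out here. The function names and the test signal are illustrative.

```python
import math


def predict(history):
    """Guess the next sample by extending the line through the last two."""
    if not history:
        return 0
    if len(history) == 1:
        return history[-1]
    return 2 * history[-1] - history[-2]


def encode(samples):
    """Replace every sample by its prediction error (residual)."""
    return [x - predict(samples[:n]) for n, x in enumerate(samples)]


def decode(residuals):
    """Rebuild the samples exactly by repeating the same predictions."""
    samples = []
    for r in residuals:
        samples.append(predict(samples) + r)
    return samples


# One period of an integer-valued sine wave as a stand-in for audio.
samples = [round(1000 * math.sin(2 * math.pi * n / 64)) for n in range(64)]
residuals = encode(samples)

print(max(abs(s) for s in samples), max(abs(r) for r in residuals[2:]))
```

After the first two samples, the residuals are far smaller than the samples themselves, so they can be stored in fewer bits while the decoder still recovers the signal exactly.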

A number of lossless audio compression formats exist. See list of lossless codecs for a listing. Some formats are associated with a distinct system, such as Direct Stream Transfer, used in Super Audio CD, and Meridian Lossless Packing, used in DVD-Audio, Dolby TrueHD, Blu-ray and HD DVD.

Some audio file formats feature a combination of a lossy format and a lossless correction; this allows stripping the correction to easily obtain a lossy file. Such formats include MPEG-4 SLS (Scalable to Lossless), WavPack, and OptimFROG DualStream.

When audio files are to be processed, either by further compression or for editing, it is desirable to work from an unchanged original (uncompressed or losslessly compressed). Processing of a lossily compressed file for some purpose usually produces a final result inferior to the creation of the same compressed file from an uncompressed original. In addition to sound editing or mixing, lossless audio compression is often used for archival storage, or as master copies.

Lossy audio compression

Lossy audio compression is used in a wide range of applications. In addition to standalone audio-only applications of file playback in MP3 players or computers, digitally compressed audio streams are used in most video DVDs, digital television, streaming media on the Internet, satellite and cable radio, and increasingly in terrestrial radio broadcasts. Lossy compression typically achieves far greater compression than lossless compression, by discarding less-critical data based on psychoacoustic optimizations.

Psychoacoustics recognizes that not all data in an audio stream can be perceived by the human auditory system. Most lossy compression reduces redundancy by first identifying perceptually irrelevant sounds, that is, sounds that are very hard to hear. Typical examples include high frequencies or sounds that occur at the same time as louder sounds. Those irrelevant sounds are coded with decreased accuracy or not at all.

Due to the nature of lossy algorithms, audio quality suffers a digital generation loss when a file is decompressed and recompressed. This makes lossy compression unsuitable for storing the intermediate results in professional audio engineering applications, such as sound editing and multitrack recording. However, lossy formats such as MP3 are very popular with end-users as the file size is reduced to 5–20% of the original size and a megabyte can store about a minute's worth of music at adequate quality.

Several proprietary lossy compression algorithms have been developed that provide higher quality audio performance by using a combination of lossless and lossy algorithms with adaptive bit rates and lower compression ratios. Examples include aptX, LDAC, LHDC, MQA and SCL6.

Coding methods

To determine what information in an audio signal is perceptually irrelevant, most lossy compression algorithms use transforms such as the modified discrete cosine transform (MDCT) to convert time domain sampled waveforms into a transform domain, typically the frequency domain. Once transformed, component frequencies can be prioritized according to how audible they are. Audibility of spectral components is assessed using the absolute threshold of hearing and the principles of simultaneous masking (the phenomenon wherein a signal is masked by another signal separated by frequency) and, in some cases, temporal masking (where a signal is masked by another signal separated by time). Equal-loudness contours may also be used to weigh the perceptual importance of components. Models of the human ear-brain combination incorporating such effects are often called psychoacoustic models.

Other types of lossy compressors, such as the linear predictive coding (LPC) used with speech, are source-based coders. LPC uses a model of the human vocal tract to analyze speech sounds and infer the parameters used by the model to produce them moment to moment. These changing parameters are transmitted or stored and used to drive another model in the decoder which reproduces the sound.

Lossy formats are often used for the distribution of streaming audio or interactive communication (such as in cell phone networks). In such applications, the data must be decompressed as the data flows, rather than after the entire data stream has been transmitted. Not all audio codecs can be used for streaming applications.

Latency is introduced by the methods used to encode and decode the data. Some codecs will analyze a longer segment, called a ''frame'', of the data to optimize efficiency, and then code it in a manner that requires a larger segment of data at one time to decode. The inherent latency of the coding algorithm can be critical; for example, when there is a two-way transmission of data, such as with a telephone conversation, significant delays may seriously degrade the perceived quality.

In contrast to the speed of compression, which is proportional to the number of operations required by the algorithm, here latency refers to the number of samples that must be analyzed before a block of audio is processed. In the minimum case, latency is zero samples (e.g., if the coder/decoder simply reduces the number of bits used to quantize the signal). Time domain algorithms such as LPC also often have low latencies, hence their popularity in speech coding for telephony. In algorithms such as MP3, however, a large number of samples have to be analyzed to implement a psychoacoustic model in the frequency domain, and latency is on the order of 23 ms.
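Since latency in this sense is counted in samples, converting it to time only requires the sampling rate. The values below are assumptions for illustration (a 1024-sample analysis window at 44.1 kHz); the text above only gives the resulting order of magnitude.

```python
def latency_ms(samples_needed: int, sample_rate_hz: int) -> float:
    """Time span covered by the samples a coder must see before it can act."""
    return 1000 * samples_needed / sample_rate_hz


# Assumed values: a 1024-sample window at the CD sampling rate of 44.1 kHz.
print(round(latency_ms(1024, 44_100), 1))  # about 23 ms
# A coder that only requantizes each sample needs no look-ahead.
print(latency_ms(0, 44_100))
```

Under these assumptions the delay comes out at about 23 ms, while a sample-by-sample coder has no such delay.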

Speech encoding

Speech encoding is an important category of audio data compression. The perceptual models used to estimate what aspects of speech a human ear can hear are generally somewhat different from those used for music. The range of frequencies needed to convey the sounds of a human voice is normally far narrower than that needed for music, and the sound is normally less complex. As a result, speech can be encoded at high quality using a relatively low bit rate. This is accomplished, in general, by some combination of two approaches:

* Only encoding sounds that could be made by a single human voice.
* Throwing away more of the data in the signal, keeping just enough to reconstruct an "intelligible" voice rather than the full frequency range of human hearing.

The earliest algorithms used in speech encoding (and audio data compression in general) were the A-law algorithm and the μ-law algorithm.
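The companding idea behind the μ-law algorithm can be sketched with the continuous formula F(x) = sgn(x) · ln(1 + μ|x|) / ln(1 + μ) with μ = 255, applied to samples in the range −1 to 1. This is an illustration under assumptions: deployed telephony codecs use a piecewise-linear approximation of this curve, and the `quantize` helper below is a hypothetical 8-bit rounding step added only to show the effect.

```python
import math

MU = 255  # value used for 8-bit mu-law


def mu_law_compress(x: float) -> float:
    """Map a sample in [-1, 1] onto a logarithmic scale in [-1, 1]."""
    return math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)


def mu_law_expand(y: float) -> float:
    """Inverse of mu_law_compress."""
    return math.copysign(math.expm1(abs(y) * math.log1p(MU)) / MU, y)


def quantize(v: float, bits: int = 8) -> float:
    """Hypothetical uniform quantizer with 2**(bits-1) - 1 positive levels."""
    levels = 2 ** (bits - 1) - 1
    return round(v * levels) / levels


quiet = 0.01  # a low-amplitude sample
uniform_error = abs(quantize(quiet) - quiet)
companded_error = abs(mu_law_expand(quantize(mu_law_compress(quiet))) - quiet)
print(uniform_error, companded_error)
```

Because the logarithmic curve spends more of the available levels on small amplitudes, the quiet sample is reproduced more accurately than with plain uniform quantization at the same number of bits.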

History

Early audio research was conducted at Bell Labs. There, in 1950, C. Chapin Cutler filed the patent on differential pulse-code modulation (DPCM). In 1973, Adaptive DPCM (ADPCM) was introduced by P. Cummiskey, Nikil S. Jayant and James L. Flanagan.

Perceptual coding was first used for speech coding compression, with linear predictive coding (LPC). Initial concepts for LPC date back to the work of Fumitada Itakura (Nagoya University) and Shuzo Saito (Nippon Telegraph and Telephone) in 1966. During the 1970s, Bishnu S. Atal and Manfred R. Schroeder at Bell Labs developed a form of LPC called adaptive predictive coding (APC), a perceptual coding algorithm that exploited the masking properties of the human ear, followed in the early 1980s with the code-excited linear prediction (CELP) algorithm which achieved a significant compression ratio for its time. Perceptual coding is used by modern audio compression formats such as MP3 and AAC.

The discrete cosine transform (DCT), developed by Nasir Ahmed, T. Natarajan and K. R. Rao in 1974, provided the basis for the modified discrete cosine transform (MDCT) used by modern audio compression formats such as MP3, Dolby Digital, and AAC. MDCT was proposed by J. P. Princen, A. W. Johnson and A. B. Bradley in 1987, following earlier work by Princen and Bradley in 1986.

The world's first commercial broadcast automation audio compression system was developed by Oscar Bonello, an engineering professor at the University of Buenos Aires.

In 1983, using the psychoacoustic principle of the masking of critical bands first published in 1967, he started developing a practical application based on the recently developed IBM PC computer, and the broadcast automation system was launched in 1987 under the name Audicom.

35 years later, almost all the radio stations in the world were using this technology manufactured by a number of companies because the inventor refused to patent his work, preferring to publish it and leave it in the public domain.

A literature compendium for a large variety of audio coding systems was published in the IEEE's ''Journal on Selected Areas in Communications'' (''JSAC''), in February 1988. While there were some papers from before that time, this collection documented an entire variety of finished, working audio coders, nearly all of them using perceptual techniques and some kind of frequency analysis and back-end noiseless coding.

Video

Uncompressed video requires a very high data rate. Although lossless video compression codecs perform at a compression factor of 5 to 12, a typical H.264 lossy compression video has a compression factor between 20 and 200.
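To put these compression factors in perspective, the raw data rate of a video stream is simply width × height × bits per pixel × frames per second. The format below (1920×1080, 24 bits per pixel, 30 frames per second) is an assumption chosen for illustration and does not come from the text above.

```python
def raw_video_bits_per_second(width, height, bits_per_pixel, fps):
    """Data rate of uncompressed video."""
    return width * height * bits_per_pixel * fps


# Assumed format: 1920x1080 pixels, 24 bits per pixel, 30 frames per second.
raw = raw_video_bits_per_second(1920, 1080, 24, 30)
print(f"uncompressed: {raw / 1e6:.0f} Mbit/s")

# Compression factors quoted above: 5 to 12 (lossless), 20 to 200 (H.264).
for factor in (5, 12, 20, 200):
    print(f"factor {factor:>3}: {raw / factor / 1e6:.1f} Mbit/s")
```

Under these assumptions the raw stream is about 1.5 Gbit/s, and a factor of 200 brings it down to about 7.5 Mbit/s.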

The two key video compression techniques used in video coding standards are the DCT and motion compensation (MC). Most video coding standards, such as the H.26x and MPEG formats, typically use motion-compensated DCT video coding (block motion compensation).

Most video codecs are used alongside audio compression techniques to store the separate but complementary data streams as one combined package using so-called ''container formats''.

Encoding theory

Video data may be represented as a series of still image frames. Such data usually contains abundant amounts of spatial and temporal redundancy. Video compression algorithms attempt to reduce redundancy and store information more compactly. Most video compression formats and codecs exploit both spatial and temporal redundancy (e.g. through difference coding with motion compensation). Similarities can be encoded by only storing differences between e.g. temporally adjacent frames (inter-frame coding) or spatially adjacent pixels (intra-frame coding). Inter-frame compression (a temporal delta encoding) (re)uses data from one or more earlier or later frames in a sequence to describe the current frame. Intra-frame coding, on the other hand, uses only data from within the current frame, effectively being still-image compression.
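
The difference between the two modes can be sketched in a few lines. The following is a minimal illustration, not how any real codec stores frames: it assumes tiny one-dimensional "frames" of integer pixel values, keeps the first frame whole (intra), and stores each later frame as per-pixel differences from its predecessor (inter). The function names `encode_sequence` and `decode_sequence` are hypothetical.

```python
def encode_sequence(frames):
    """Store the first frame whole (intra) and later frames as per-pixel deltas (inter)."""
    encoded = [("intra", list(frames[0]))]
    for prev, cur in zip(frames, frames[1:]):
        encoded.append(("inter", [c - p for p, c in zip(prev, cur)]))
    return encoded


def decode_sequence(encoded):
    """Rebuild every frame by adding each delta to the previously decoded frame."""
    frames = []
    for kind, data in encoded:
        if kind == "intra":
            frames.append(list(data))
        else:
            frames.append([p + d for p, d in zip(frames[-1], data)])
    return frames


# Three tiny 1-D "frames"; only one pixel changes between consecutive frames.
frames = [[10, 10, 10, 10], [10, 12, 10, 10], [10, 12, 10, 9]]
encoded = encode_sequence(frames)
decoded = decode_sequence(encoded)
```

The inter entries are mostly zeros, which is what makes them cheap to store after entropy coding. They also cannot be decoded without the frame they refer to.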

The intra-frame video coding formats used in camcorders and video editing employ simpler compression that uses only intra-frame prediction. This simplifies video editing software, as it prevents a situation in which a compressed frame refers to data that the editor has deleted.

Usually, video compression additionally employs lossy compression techniques like quantization that reduce aspects of the source data that are (more or less) irrelevant to human visual perception by exploiting perceptual features of human vision. For example, small differences in color are more difficult to perceive than are changes in brightness. Compression algorithms can average a color across these similar areas in a manner similar to that used in JPEG image compression. As in all lossy compression, there is a trade-off between video quality and bit rate, cost of processing the compression and decompression, and system requirements. Highly compressed video may present visible or distracting artifacts.
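
One simple way to average color across similar areas is to keep brightness at full resolution and store each color-difference plane at reduced resolution. The sketch below is an illustration under stated assumptions: the plane is a list of rows of integers with even width and height, each 2x2 block is replaced by its integer average, and the name `subsample_chroma` is hypothetical rather than taken from any library.

```python
def subsample_chroma(plane):
    """Average each 2x2 block of a chroma plane (even width and height assumed)."""
    out = []
    for y in range(0, len(plane), 2):
        row = []
        for x in range(0, len(plane[0]), 2):
            total = (plane[y][x] + plane[y][x + 1]
                     + plane[y + 1][x] + plane[y + 1][x + 1])
            row.append(total // 4)  # integer average of the four samples
        out.append(row)
    return out


# A 4x4 chroma plane with two regions of nearly uniform color.
cb = [
    [100, 102, 50, 52],
    [101, 101, 51, 51],
    [100, 100, 50, 50],
    [100, 100, 50, 50],
]
small = subsample_chroma(cb)  # 2x2 result: a quarter of the original samples
```

The small color variations inside each block are lost, which is the lossy part. The number of chroma samples to encode drops by a factor of four.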

Methods other than the prevalent DCT-based transform formats, such as fractal compression, matching pursuit and the use of a discrete wavelet transform (DWT), have been the subject of some research, but are typically not used in practical products. Wavelet compression is used in still-image coders and video coders without motion compensation. Interest in fractal compression seems to be waning, due to recent theoretical analysis showing a comparative lack of effectiveness of such methods.

Inter-frame coding

In inter-frame coding, individual frames of a video sequence are compared from one frame to the next, and the video compression codec records the differences to the reference frame. If the frame contains areas where nothing has moved, the system can simply issue a short command that copies that part of the previous frame into the next one. If sections of the frame move in a simple manner, the compressor can emit a (slightly longer) command that tells the decompressor to shift, rotate, lighten, or darken the copy. This longer command still remains much shorter than data generated by intra-frame compression. Usually, the encoder will also transmit a residue signal which describes the remaining more subtle differences to the reference imagery. Using entropy coding, these residue signals have a more compact representation than the full signal. In areas of video with more motion, the compression must encode more data to keep up with the larger number of pixels that are changing. Commonly during explosions, flames, flocks of animals, and in some panning shots, the high-frequency detail leads to quality decreases or to increases in the variable bitrate.
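
The "shift" case above can be sketched as block matching. This is a minimal illustration covering translation only (no rotation or brightness change), and it assumes frames are lists of rows of integers, an exhaustive search window, and the sum of absolute differences (SAD) as the matching cost. All function names are hypothetical.

```python
def sad(block_a, block_b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(a - b)
               for row_a, row_b in zip(block_a, block_b)
               for a, b in zip(row_a, row_b))


def get_block(frame, top, left, size):
    """Cut a size x size block out of a frame."""
    return [row[left:left + size] for row in frame[top:top + size]]


def find_motion_vector(ref, cur, top, left, size, search):
    """Exhaustive search within +/- search pixels; returns (dy, dx, residual)."""
    target = get_block(cur, top, left, size)
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            y, x = top + dy, left + dx
            # Skip candidate positions that fall outside the reference frame.
            if y < 0 or x < 0 or y + size > len(ref) or x + size > len(ref[0]):
                continue
            cost = sad(target, get_block(ref, y, x, size))
            if best is None or cost < best[0]:
                best = (cost, dy, dx)
    _, dy, dx = best
    predicted = get_block(ref, top + dy, left + dx, size)
    # The residual is what remains after subtracting the motion-compensated prediction.
    residual = [[t - p for t, p in zip(t_row, p_row)]
                for t_row, p_row in zip(target, predicted)]
    return dy, dx, residual


def make_frame(top, left):
    """6x6 black frame with a bright 2x2 square at the given position."""
    frame = [[0] * 6 for _ in range(6)]
    for y in range(top, top + 2):
        for x in range(left, left + 2):
            frame[y][x] = 200
    return frame


ref = make_frame(2, 1)  # reference frame
cur = make_frame(2, 2)  # same square, moved one pixel to the right
dy, dx, residual = find_motion_vector(ref, cur, top=2, left=2, size=2, search=2)
```

Here the encoder would only need to transmit the vector `(dy, dx)` and an all-zero residual instead of the block's pixel values.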

Hybrid block-based transform formats

Many commonly used video compression methods (e.g., those in standards approved by the ITU-T or ISO) share the same basic architecture that dates back to H.261, which was standardized in 1988 by the ITU-T. They mostly rely on the DCT, applied to rectangular blocks of neighboring pixels, and temporal prediction using motion vectors, as well as, nowadays, an in-loop filtering step.

In the prediction stage, various deduplication and difference-coding techniques are applied that help decorrelate data and describe new data based on already transmitted data.

Then rectangular blocks of remaining pixel data are transformed to the frequency domain. In the main lossy processing stage, frequency domain data gets quantized in order to reduce information that is irrelevant to human visual perception.
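
The transform and quantization steps can be sketched on a single row of eight samples. This is a simplified illustration under stated assumptions: it uses a one-dimensional orthonormal DCT-II (real coders apply the transform in two dimensions to blocks), a single uniform quantization step instead of a perceptually tuned table, and hypothetical function names.

```python
import math

N = 8  # number of samples in the row


def dct_1d(x):
    """Orthonormal DCT-II of a length-N sequence."""
    out = []
    for k in range(N):
        s = sum(x[n] * math.cos(math.pi * (n + 0.5) * k / N) for n in range(N))
        scale = math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)
        out.append(scale * s)
    return out


def idct_1d(coeffs):
    """Inverse of dct_1d (orthonormal DCT-III)."""
    out = []
    for n in range(N):
        s = coeffs[0] * math.sqrt(1 / N)
        s += sum(coeffs[k] * math.sqrt(2 / N)
                 * math.cos(math.pi * (n + 0.5) * k / N) for k in range(1, N))
        out.append(s)
    return out


def quantize(coeffs, step):
    """Map each coefficient to the nearest integer multiple of step (the lossy part)."""
    return [round(c / step) for c in coeffs]


def dequantize(levels, step):
    """Scale quantized levels back to approximate coefficient values."""
    return [level * step for level in levels]


samples = [52, 55, 61, 66, 70, 61, 64, 73]
coeffs = dct_1d(samples)
levels = quantize(coeffs, step=10)
restored = idct_1d(dequantize(levels, step=10))
```

A larger `step` drives more coefficients to zero and lowers the bit rate, at the cost of a larger difference between `restored` and `samples`.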

In the last stage, statistical redundancy gets largely eliminated by an entropy coder, which often applies some form of arithmetic coding.
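
An arithmetic coder is too long to show here, but the limit it approaches is easy to estimate. The sketch below does not implement any entropy coder: it only computes the empirical Shannon entropy of a list of symbols, treated as independent draws, which is the average number of bits per symbol an ideal coder for that model would need. The function name and the sample data are hypothetical.

```python
import math
from collections import Counter


def entropy_bits_per_symbol(symbols):
    """Empirical Shannon entropy, in bits per symbol, of a sequence."""
    counts = Counter(symbols)
    total = len(symbols)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Quantized coefficients are typically dominated by zeros.
levels = [0, 0, 0, 0, 0, 0, 1, -1]
skewed = entropy_bits_per_symbol(levels)

# Four equally likely values need a full two bits each.
uniform = entropy_bits_per_symbol([0, 1, 2, 3])
```

The skewed distribution needs roughly one bit per symbol, against two bits for the uniform one. That gap is the statistical redundancy an entropy coder removes.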

In an additional in-loop filtering stage, various filters can be applied to the reconstructed image signal. Because these filters are also computed inside the encoding loop, they can help compression: they can be applied to reference material before it gets used in the prediction process, and they can be guided using the original signal. The most popular examples are deblocking filters, which blur out blocking artifacts from quantization discontinuities at transform block boundaries.
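
The idea behind a deblocking filter can be sketched on one row of pixels. This is a deliberately simplistic illustration and not the filter defined by any standard: it assumes block boundaries every `block_size` samples, treats a small step across a boundary as a quantization artifact, and leaves large steps alone on the assumption that they are real edges. The function name and the threshold value are hypothetical.

```python
def deblock_row(row, block_size, threshold):
    """Soften small steps at block boundaries; leave large steps (real edges) untouched."""
    out = list(row)
    for edge in range(block_size, len(row), block_size):
        p, q = row[edge - 1], row[edge]  # the two pixels facing each other across the boundary
        if abs(p - q) < threshold:
            avg = (p + q) / 2
            out[edge - 1] = (p + avg) / 2  # pull each side halfway toward the mean
            out[edge] = (q + avg) / 2
    return out


# Two flat 4-pixel blocks whose levels differ slightly after quantization.
artifact = [100, 100, 100, 100, 108, 108, 108, 108]
smoothed = deblock_row(artifact, block_size=4, threshold=16)

# A genuine edge that happens to sit on the block boundary is preserved.
edge_row = [100, 100, 100, 100, 200, 200, 200, 200]
kept = deblock_row(edge_row, block_size=4, threshold=16)
```

Running the same filter in both encoder and decoder keeps their reference frames identical, which is why it can sit inside the prediction loop.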

History

In 1967, A.H. Robinson and C. Cherry proposed a run-length encoding bandwidth compression scheme for the transmission of analog television signals. The DCT, which is fundamental to modern video compression, was introduced by Nasir Ahmed, T. Natarajan and K. R. Rao in 1974.

H.261, which debuted in 1988, commercially introduced the prevalent basic architecture of video compression technology. It was the first video coding format based on DCT compression. H.261 was developed by a number of companies, including Hitachi, PictureTel, NTT, BT and Toshiba.

The most popular video coding standards used for codecs have been the MPEG standards. MPEG-1 was developed by the Moving Picture Experts Group (MPEG) in 1991, and it was designed to compress VHS-quality video. It was succeeded in 1994 by MPEG-2/H.262, which was developed by a number of companies, primarily Sony, Thomson and Mitsubishi Electric. MPEG-2 became the standard video format for DVD and SD digital television. In 1999, it was followed by MPEG-4/H.263. It was also developed by a number of companies, primarily Mitsubishi Electric, Hitachi and Panasonic.

H.264/MPEG-4 AVC was developed in 2003 by a number of organizations, primarily Panasonic, Godo Kaisha IP Bridge and LG Electronics. AVC commercially introduced the modern context-adaptive binary arithmetic coding (CABAC) and context-adaptive variable-length coding (CAVLC) algorithms. AVC is the main video encoding standard for Blu-ray Discs, and is widely used by video sharing websites and streaming internet services such as YouTube, Netflix